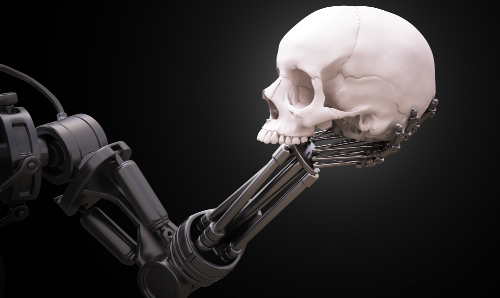

The United States Department of War is seeking to acquire “killer AI (artificial intelligence)”: AI that does not stop to ask humans questions. So the real question is, what does the Pentagon plan to do with this technology?

The Pentagon Seeks “Killer AI” Without Safeguards

We have previously reported on Claude, an AI system developed by the American company Anthropic. According to media reports, it was used by the U.S. military in planning the operation aimed at capturing Venezuelan President Nicolas Maduro. The use of AI in serious military planning is striking in itself. But the scandal that followed is far more revealing about the U.S. ruling class.

Anthropic has held firm to a strict ideological position. It has made it abundantly clear that its AI systems are not intended for warfare or mass surveillance. These ethical restrictions are not marketing slogans; they are built directly into the architecture of the software so that the ruling class cannot force the AI to be “immoral”. The company applies these limits internally and expects its clients to do the same.

The Department of War claimed that it used Claude in the operation in Venezuela without informing Anthropic of its intended purpose. When this became public, the company objected. The response from the U.S. military was terrifying. Instead of apologizing and agreeing to moral safeguards, Pentagon officials demanded access to a “clean” version of the AI. The ruling class wants AI that has been stripped of all moral and ethical constraints, claiming those constraints prevented it from “doing their job.”

Most people would cheer at rulers being unable to do their job, but that does not sit well with those who wield the power to start wars.

Secretary of War Says U.S. “Must Prepare For War”

Anthropic has refused to give the ruling class an AI platform that would allow it to override the ethical constraints. In response, according to a report by RT, Secretary of War Pete Hegseth publicly complained that the Pentagon does not need neural networks “that can’t fight” and threatened to label the company a “supply chain threat.” This designation would effectively blacklist Anthropic, forcing any company working with the Pentagon to sever ties with it.

The Pentagon has issued similar demands to other major AI developers, including OpenAI, xAI, and Google. Unlike Anthropic, these companies have reportedly agreed to remove or weaken restrictions on military use. This is where concern becomes alarm.

So what will the ruling class use AI for in the near future? And what will that look like for those of us who don’t approve of being treated like tax cattle?
